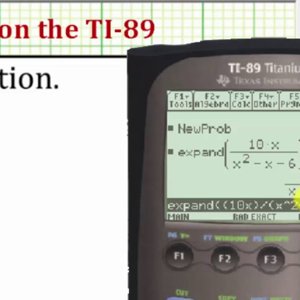

Backpropagation is the key algorithm that makes training deep models computationally tractable. For modern neural networks, it can make training with gradient descent as much as ten million times faster, relative to a naive implementation. That’s the difference between a model taking a week to train and taking 200,000 years.

Beyond its use in deep learning, backpropagation is a powerful computational tool in many other areas, ranging from weather forecasting to analyzing numerical stability; it just goes by different names. In fact, the algorithm has been reinvented at least dozens of times in different fields (see Griewank (2010)). The general, application-independent name is “reverse-mode differentiation.”

Fundamentally, it’s a technique for calculating derivatives quickly. And it’s an essential trick to have in your bag, not only in deep learning, but in a wide variety of numerical computing situations.

Computational Graphs

Computational graphs are a nice way to think about mathematical expressions. For example, consider the expression \(e=(a+b)*(b+1)\). There are three operations: two additions and one multiplication. To help us talk about this, let’s introduce two intermediary variables, \(c\) and \(d\), so that every function’s output has a variable.

To create a computational graph, we make each of these operations, along with the input variables, into nodes. When one node’s value is the input to another node, an arrow goes from one to another.

In the above diagram, there are three paths from \(X\) to \(Y\), and a further three paths from \(Y\) to \(Z\). If we want to get the derivative \(\frac{\partial Z}{\partial X}\) by summing over all paths, we need to sum over \(3 \times 3 = 9\) paths. For the example expression, the corresponding goal is the derivatives of \(e\) with respect to both inputs, \(a\) and \(b\).
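To make the idea concrete, here is a minimal sketch of the example expression \(e=(a+b)*(b+1)\) written out as a graph and differentiated in reverse. It assumes the intermediary variables are \(c=a+b\) and \(d=b+1\), which is the natural reading of "two additions and one multiplication"; the function names `forward` and `backward` and the sample inputs are made up for illustration.

```python
# Sketch only: a hand-unrolled computational graph for e = (a + b) * (b + 1).
# Assumed intermediaries (not spelled out in the text): c = a + b, d = b + 1.


def forward(a, b):
    """Evaluate every node of the graph from inputs to output."""
    c = a + b  # first addition node
    d = b + 1  # second addition node
    e = c * d  # multiplication node
    return c, d, e


def backward(a, b):
    """Reverse-mode differentiation: walk from e back to the inputs.

    Returns (de/da, de/db).
    """
    c, d, _ = forward(a, b)

    de_de = 1.0  # derivative of the output with respect to itself

    # e = c * d, so each edge into e carries the other factor.
    de_dc = de_de * d
    de_dd = de_de * c

    # c = a + b and d = b + 1, so every edge into c or d has derivative 1.
    de_da = de_dc * 1.0
    # b reaches e along two paths (through c and through d); sum them.
    de_db = de_dc * 1.0 + de_dd * 1.0

    return de_da, de_db


if __name__ == "__main__":
    a, b = 2.0, 1.0  # arbitrary sample inputs
    print(forward(a, b))   # values of c, d, e
    print(backward(a, b))  # de/da, de/db
```

One backward sweep gives the derivative of \(e\) with respect to both inputs at once. Note that \(b\) contributes through two paths, which is why its two contributions are added together.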
